August 2020 – Volume 24, Number 2

Mohammad Bagheri

University of Tehran, Iran

<bagheri65@alumni.ut.ac.ir>

Abstract

The present study reports the process of developing and validating a self-report questionnaire that can be employed to examine the technological pedagogical content knowledge (TPACK) perceptions of Iranian EFL teachers. To conduct the study, a survey instrument consisting of items adapted from two existing TPACK-based survey instruments was generated. The content validity of the items was evaluated by two ELT experts who were experienced in teaching English with technology. The resulting survey was then administered to a group of participants (N = 206), its construct validity was established using Exploratory Factor Analysis (EFA) and Confirmatory Factor Analysis (CFA), and its reliability was calculated using Cronbach’s alpha. The results of the statistical analyses indicated that responding teachers could distinguish six of the seven constructs in the original TPACK framework. Moreover, items associated with the knowledge required for teaching with the Web and the Internet loaded on a separate factor. Therefore, a seven-factor solution comprising 31 items was proposed, and it was concluded that the constructed survey instrument was a valid and reliable tool for measuring the perceived level of technology integration literacy among Iranian English instructors. Implications for validating future TPACK surveys and planning ICT courses in Iran’s EFL settings are discussed.

Keywords: TPACK, Computer-Assisted Language Learning (CALL), technology integration literacy, EFL contexts, Iranian EFL teachers

As a modern approach to pedagogy, Computer-Assisted Instruction (CAI) is an important source of reform and innovation in educational contexts and has opened up many possibilities for improving instructional practices and course delivery methods. CAI offers numerous benefits for teachers as well as learners: it provides multimedia content, channels of communication between class members and distant learners, and practical exercises with tutorial feedback, and it shifts the learning context from teacher-centered to learner-oriented (Chapelle, 2008; Cheung & Slavin, 2012; Doughty & Long, 2003; Li & Cumming, 2001). The diverse range of technological tools available for use in the classroom and the manner in which they are integrated into the curriculum have drastically changed the way different content areas are taught. In recent decades, foreign language instruction has been strongly influenced by technology-enhanced pedagogy and by the potential of ICT for improving learners’ comprehension and their manipulation of the target language (Taylor & Gitsaki, 2004; Warschauer, 1996). The increasing infusion of ICT tools and web technology into language teaching contexts requires EFL teachers to understand how to integrate technology into pedagogical practices.

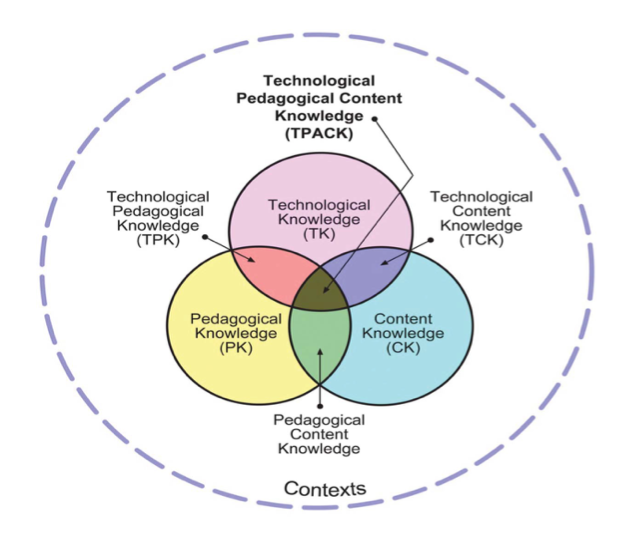

One important framework developed to investigate teachers’ competencies in using technology in classrooms, and to describe the body of knowledge teachers need for intelligent pedagogical uses of technology, is Technological Pedagogical Content Knowledge (TPACK), which was proposed by Mishra and Koehler (2006). TPACK is anchored in the idea that when integrating ICT into pedagogy, teachers need to combine three knowledge sources: technological knowledge (TK), pedagogical knowledge (PK), and content knowledge (CK). The interactions between these three knowledge sources create four other sources of knowledge, namely technological pedagogical knowledge (TPK), technological content knowledge (TCK), pedagogical content knowledge (PCK), and technological pedagogical content knowledge (TPACK). TPACK has been welcomed by researchers in the field of educational technology because it helps them explore various topics associated with technology integration, such as teacher knowledge and beliefs, teacher preparation, planning ICT courses, and assessing teachers’ technology literacy (Angeli & Valanides, 2009; Koehler & Mishra, 2005; Kramarski & Michalsky, 2009). Figure 1 depicts the TPACK framework and its components.

Figure 1. TPACK Framework (Reproduced by permission of the publisher, © 2012 by tpack.org).

In recent years, Computer-Assisted Language Learning (CALL) has permeated Iran’s EFL classes, and more and more teachers are required to teach in virtual and online environments. A number of researchers (e.g., Dashtestani, 2016; Karimi, 2014) have verified the beneficial effects of using technological tools and CALL materials on Iranian students’ language learning outcomes. Given the significance of technology integration for teaching foreign languages, a number of other researchers have explored Iranian EFL teachers’ CALL literacy. For instance, Fatemi Jahromi and Salimi (2011) concluded that although Iranian English teachers in high schools believed that technology plays a positive role in facilitating students’ learning, they perceived themselves as only moderately competent in using technological tools and integrating technology into the curriculum. While a number of research studies (e.g., Hedayati & Marandi, 2014; Nami, Marandi, & Sotoudehnama, 2015) have investigated Iranian EFL teachers’ attitudes towards CALL and technology literacy, few studies have employed the TPACK framework to design a survey instrument that assesses Iranian teachers’ knowledge of using technology to teach English as a foreign language.

Since a meticulously validated questionnaire for assessing the TPACK competency of Iranian EFL teachers is currently lacking, the key objective of the present study is to develop and validate a survey instrument that can measure Iranian English teachers’ perceptions with regard to the seven constructs in the TPACK framework. More specifically, we adapted items from two existing TPACK survey instruments and attempted to validate the resulting instrument using both EFA and CFA with a sample consisting exclusively of Iranian EFL instructors. Before explaining the empirical procedure of the study, we first describe the TPACK framework and its constituents. Subsequently, we introduce a number of questionnaires that have been constructed to measure TPACK in various educational settings.

Review of the Literature

PCK and TPACK

Teaching any subject matter is a complex cognitive undertaking in which the teacher must draw on various knowledge sources to create the best learning experience for the students (Leinhardt & Greeno, 1986). Accordingly, teachers who have limited and incoherent knowledge about a specific subject matter cannot function as adequately as those who draw on differentiated and integrated knowledge (Magnusson, Krajcik, & Borko, 1999). Like any other profession, teaching has a body of knowledge that distinguishes it from other professions. Individuals who possess such knowledge, as represented by specific skills, are regarded as true professionals and are empowered to exercise the profession. Shulman (1986) called teachers’ professional knowledge “pedagogical content knowledge (PCK)”. This concept concerns how the combination of content and pedagogy creates an understanding on the part of teachers that assists them in effectively organizing, adapting, and conveying particular aspects of subject matter to students. Since its introduction, PCK has grown into a distinctive theoretical concept in the literature on teacher education, and researchers (e.g., Cochran, DeRuiter, & King, 1993; Grossman, 1990; Wilson, Shulman, & Richert, 1987) have sought to delineate how this concept relates to teachers’ beliefs and other areas of teachers’ knowledge base, such as general pedagogical knowledge or subject matter knowledge.

To better explain the interactions between content, pedagogy, and technology and to account for what teachers need to know to integrate technology into education, some researchers presented an extended view of PCK that includes an element of technology literacy. Building on Shulman’s idea of PCK, Mishra and Koehler (2006) introduced TPACK as a theoretical framework for understanding the competencies teachers require for efficient technology integration. They conceptualized TPACK as a synthesized and situated form of knowledge that is grounded in the interactions of subject matter, pedagogy, and technology. Cox and Graham (2009) define TPACK as a teacher’s capability to employ technology for coordinating subject-specific activities with topic-specific representations to facilitate students’ learning. Chai, Koh, and Tsai (2011) argue that ICT courses that aim to foster teacher development for more efficient integration of technology should move away from technocentric perspectives and adopt an approach emphasizing pedagogy and content, and TPACK reflects such a paradigm shift. In the past decade, TPACK has been successfully used to plan and run ICT courses for both pre-service and in-service teachers (see Harris & Hofer, 2011) and to develop survey instruments for measuring teachers’ technology integration competencies. A definition of each TPACK category, together with an example item for each construct, appears in Table 1.

It should be noted that before Mishra and Koehler (2006) proposed TPACK idea, other researchers had briefly mentioned the integration of technology into PCK construct. Pierson (2001), for instance, mentioned the relationship between the three elements by stating that teachers’ technology integration practices depend on their teaching experience. Gunter and Baumbach (2004) used the term “integration literacy” to discuss the interplay between the three elements. Technology integration, in their opinion, requires a good foundation in computer literacy, information literacy, and integration literacy. Other scholars expressed similar ideas under different names. Angeli and Valanides (2005) introduced ICT-related PCK to refer to necessary skills that educators need to be able to teach with ICT. They employed this concept to introduce an Instructional System Design (ISD) model, which can engage teachers in technology-rich design activities. Electronic PCK was yet another term for describing a specific type of teachers’ expertise for successful technology integration (Franklin, 2004; Irving, 2006).

Measuring TPACK

To date, a number of TPACK surveys have been created. Efforts to construct such surveys began with Koehler and Mishra (2005). The 14-item survey they developed aimed at measuring a group of teachers’ perceptions of their understanding of content, pedagogy, and technology over an instructional course during which faculty members and students collaborated to design online educational materials. Although the instrument could track changes in teachers’ TPACK perceptions, it could not be employed in other contexts due to the small sample size (N = 12) and the highly contextualized nature of the items. Results of their study indicated that while at the beginning of the course participants held simplistic ideas about the nature of online pedagogy, by the end of the program they had concluded that planning for online courses required more time and energy, and that teaching an online course is more than translating the content of traditional face-to-face courses into a new medium.

Schmidt et al. (2009) developed the Survey of Preservice Teachers’ Knowledge of Teaching and Technology to examine how preservice teachers used the benefits of technology during teacher education program and in practical courses offered in PK-6 classrooms. To construct the TPACK survey, researchers initially reviewed instruments that were already being used to assess teachers’ technology use in educational settings. Then, three professors with expertise in TPACK were asked to evaluate all collected items in terms of construct and content validity. The instrument was, subsequently, given to 124 pre-service teachers who were preparing to instruct different disciplines, and who were attending a 15-week course aiming to improve their skills in technology use for PK-6 classrooms and learning environments that emphasized integrating ICT tools into all content areas. Researchers of the study reported good reliability for each of the seven TPACK constructs (alpha = .80 and above), but the questionnaire could not be considered as having construct validity because they analyzed each factor individually.

A number of TPACK surveys have been developed to assess technology literacy of specific groups of teachers. Archambault and Crippen (2009) examined the TPACK of 596 K-12 online teachers in the USA using a 24-item questionnaire. To establish the construct validity of the survey instrument, a piloting process in two phases was carried out. The instrument was then emailed to a large number of K-12 online distance educators across the United States. Based on their findings, participants felt that their knowledge associated with technology (TK) was not as strong as their knowledge of pedagogy and content. Another important finding of their study was that there were large correlations between TCK, TPK, and TPACK constructs, and this prompted researchers to call into question the distinctiveness of these three TPACK domains. Referring to the weaknesses of TPACK in terms of precision and heuristic value, they concluded that TPACK might be effective theoretically, but it provides limited practical benefits for teachers, researchers, and administrators.

Instead of employing surveys, some researchers have used other methods to measure teachers’ TPACK. For instance, Angeli and Valanides (2009) introduced Technology Mapping (TM) as a model to assess teachers’ ICT-TPACK, which is a special strand of TPACK. As a situative methodology, this model evaluates teachers’ technology literacy with regard to five criteria: (a) identification of topics to be taught with technology, (b) identification of representations for transforming content to be taught into forms that are comprehensible to learners and that are difficult to support by traditional means, (c) identification of teaching strategies that are difficult or impossible to implement by traditional means, (d) selection of appropriate ICT tools and effective pedagogical uses of their affordances, and (e) identification of appropriate strategies for the infusion of technology into the classroom. In addition, the researchers used self-assessment, peer assessment, and expert assessment of the design-based performances of participating teachers as formative and summative assessments of teachers’ understanding.

The overlapping nature of the TPACK framework has led some scholars (Archambault & Barnett, 2010; Cox & Graham, 2009; Lee & Tsai, 2010) to raise questions about the exact boundaries between its seven constructs. Thus, studies concerned with validating TPACK survey instruments often report difficulty in isolating all seven components of the framework proposed by Mishra and Koehler (2006). Koh, Chai, and Tsai (2010) examined the TPACK profile of Singaporean pre-service teachers using a large sample of participants (N = 1185). The final model that was developed and validated through CFA yielded five factors: technological knowledge (TK), content knowledge (CK), knowledge of pedagogy (KP), knowledge of teaching with technology (KTT), and knowledge from critical reflection (KCR). Koh et al. (2010) argued that since the Singaporean teachers responding to the survey were rather inexperienced, they failed to distinguish PK and PCK items, and consequently the items of these two factors loaded on one factor. Further, they attributed participants’ failure to distinguish between TCK, TPK, and TPACK items to the fact that such items were not based upon specific examples of technology integration.

Method

Instruments

In order to develop a survey instrument to measure Iranian EFL teachers’ self-assessment of the seven knowledge domains in the TPACK framework, items were adapted from the TPACK questionnaire developed by Koh and Sing (2011) and from the survey of pre-service teachers’ knowledge of teaching with technology developed by Schmidt et al. (2009). Then, an expert review method was used to establish the content validity of the questionnaire. Following the recommendations put forward by Dillman (2007), two EFL teachers who had considerable experience in teaching online language courses were consulted for their views on the items incorporated in the initial questionnaire. They were requested to meticulously review the questionnaire and assess to what extent its items were relevant to the competencies needed for teaching English with technology. In addition, they were asked to suggest any revisions that they thought would clarify the items and improve the instrument’s capacity to fulfill its intended purposes.

Based on feedback received from the two reviewers, several changes were made to the instrument. First, all the items concerned with CS2 (curriculum subject 2) were excluded from the questionnaire. This change was made because Koh and Sing’s (2011) instrument was designed to target the TPACK level of Taiwanese and Singaporean teachers who had been trained to instruct two subject matters (CS1 and CS2), but participants in the present study were teaching just one subject (English as a second/foreign language). This resulted in the exclusion of two CK items, two PCK items, and one TPACK item from the questionnaire. Second, the phrase “content of first teaching subject (CS1)” that appeared in a number of items was replaced with the phrase “knowledge of/about English grammar, vocabulary, and pronunciation”. Accordingly, an item like “I have sufficient knowledge about my first teaching subject (CS1)” was changed to “I have sufficient knowledge about English grammar, vocabulary, and pronunciation.” Furthermore, a number of items in the pedagogical knowledge (PK) construct were reworded so that they could better evaluate EFL teachers’ competence in fostering communicative skills in language learners.

Another important change made to the questionnaire was the addition of a number of items that asked participants about their competency in carrying out web-based pedagogy. In recent decades, the Internet and web technology have become important sources of innovation in language classrooms, and many researchers (e.g., Laakkonen, 2011; Pellettieri, 2000; Stanley, 2013) have discussed the potential of the Internet and the web for improving the cognitive engagement and linguistic ability of language learners. Considering these trends, it was decided to adapt a number of items from the questionnaire developed by Lee and Tsai (2010) and incorporate them in the initial questionnaire. These items evaluated teachers’ confidence in their ability to implement web-based pedagogy and to search the web for various subject-specific materials, and they were included under a new factor called web content knowledge (WCK) (Lee & Tsai, 2010). The initial survey instrument thus included 40 items in eight categories that gauged teachers’ self-assessment of TPACK subscales on a 7-point Likert scale: (1) strongly disagree, (2) disagree, (3) slightly disagree, (4) undecided, (5) slightly agree, (6) agree, and (7) strongly agree. In addition, the questionnaire included some demographic items asking responding teachers about their gender, age, highest academic degree, and years of experience in teaching EFL.
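To illustrate how responses on this 7-point scale can be turned into a subscale score, the following is a minimal Python sketch. It assumes that a subscale score is the mean of the numeric codes of its items, which is one common convention and is not stated explicitly in the study; the function name `subscale_score` and the sample answers are hypothetical.

```python
from statistics import mean

# Numeric codes for the 7-point scale, as listed in the text.
LIKERT = {
    "strongly disagree": 1,
    "disagree": 2,
    "slightly disagree": 3,
    "undecided": 4,
    "slightly agree": 5,
    "agree": 6,
    "strongly agree": 7,
}

def subscale_score(responses):
    """Average the numeric codes of one respondent's answers to one subscale.

    Assumption: the subscale score is the item mean (not the item sum).
    """
    return mean(LIKERT[r.strip().lower()] for r in responses)

# Hypothetical answers of one teacher to three CK items (not study data).
ck_answers = ["Agree", "Strongly agree", "Slightly agree"]
print(subscale_score(ck_answers))
```

Averaging rather than summing keeps every subscale on the original 1 to 7 metric, so subscales with different numbers of items remain directly comparable.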

Table 1. TPACK Constructs.

| Constructs | Definitions | Example items |
| --- | --- | --- |
| TK | Knowledge of computer and ICT tools and how to operate them | I keep up with important new technologies. |
| PK | Knowledge of methods of teaching and how to improve language learning outcomes | I am able to help my students to reflect on their language learning strategies. |
| PCK | Knowledge of how to effectively convey EFL/ESL-related content matter to language learners | Without using technology, I know how to select effective teaching approaches to improve students’ language learning. |
| TCK | Knowledge of how to represent EFL/ESL-related content matter using technology | I am able to use technology to obtain more knowledge about English grammar, vocabulary, and pronunciation. |
| CK | Knowledge about EFL/ESL-related content matter | I have sufficient knowledge about English grammar, vocabulary, and pronunciation. |
| TPK | Knowledge of how to use technology to implement different teaching methods | I am able to use technology to introduce real world scenarios to my students. |
| TPACK | Knowledge of how to facilitate students’ learning through the use of appropriate pedagogy and technology | I can appropriately combine my knowledge of English, technology, and pedagogy to convey materials to language learners. |
| WCK | Knowledge of how to search the web to find EFL/ESL-related materials | I am able to click the hyperlink to connect to another Website. |

Participants

The TPACK survey was created in Google Forms, and its link was emailed to 956 practicing Iranian EFL teachers who were teaching English courses in different language institutes across the country. The questionnaire was sent to the entire sample in August 2017, and recipients were requested to submit the completed form within a month. Teachers were required to read each statement and to specify on the 7-point Likert scale to what extent they agreed with it. They were informed that their participation in the survey was voluntary and that they could withdraw at any time. A total of 206 teachers, constituting a return rate of 21.54%, filled out the questionnaire and returned it to the researcher. The sample consisted of 106 (51.5%) male and 100 (48.5%) female participants. The majority of participants, 173 (84%), were English majors. Of these, 113 (54.9%) held an M.A. degree, 27 (13.1%) held a Ph.D. degree, and 33 (16%) held a B.A. degree. The rest of the participants, 33 (16%), were non-English majors. The age of the participants ranged from 20 to 66, with an average of 32.79 years. Participants’ work experience ranged from 1 to 33 years, with an average of 9.61 years. Accordingly, the sample was composed of rather experienced EFL teachers. The majority of the participants, 85%, reported that they had taken some type of ICT course as part of their previous educational programs.

Data Analysis

After participants answered the items in the questionnaire, their responses were entered into SPSS 22. Before any statistical analysis was run, the data were checked for normality. To this end, measures of skewness and kurtosis were calculated for each subscale in the TPACK framework. The coefficient of skewness is a measure of the degree of symmetry in the distribution of a variable, and the coefficient of kurtosis indicates the degree of tailedness in that distribution (Westfall, 2014). Based on the results, the skewness coefficients ranged from -.68 to -1.56, suggesting that the scores were not skewed to a significant degree. In addition, kurtosis values were between .78 and 3.59, which were indicative of a rather peaked distribution.
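As an illustration of the two coefficients, the sketch below computes moment-based skewness and excess kurtosis in plain Python. This is not the exact SPSS routine: statistical packages typically apply small-sample corrections, so values obtained this way will differ slightly from SPSS output. The scores shown are hypothetical, not data from the study.

```python
def central_moment(xs, order):
    """Return the central moment of the given order (divides by n)."""
    n = len(xs)
    m = sum(xs) / n
    return sum((x - m) ** order for x in xs) / n

def skewness(xs):
    """Moment-based skewness: 0 for a symmetric distribution,
    negative when the longer tail is on the left."""
    return central_moment(xs, 3) / central_moment(xs, 2) ** 1.5

def excess_kurtosis(xs):
    """Moment-based kurtosis minus 3, so a normal distribution scores 0."""
    return central_moment(xs, 4) / central_moment(xs, 2) ** 2 - 3

# Hypothetical subscale scores with most values near the top of the scale.
scores = [7, 7, 6, 6, 5, 2]
print(skewness(scores), excess_kurtosis(scores))
```

Scores that cluster near the upper end of a 7-point scale, as in the hypothetical list, produce a negative skewness, which is the direction of the coefficients reported above.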

Construct validation of the initial questionnaire included both exploratory factor analysis (EFA) and confirmatory factor analysis (CFA). As a first step, responses were subjected to EFA. For this purpose, we followed the recommendations given by Costello and Osborne (2005), with Principal Axis Factoring as the extraction method and Oblimin with Kaiser Normalization as the rotation method. Factors with eigenvalues greater than one and items with factor loadings greater than .40 were retained for the CFA phase. As Thorndike (2005) states, EFA helps researchers to determine whether questionnaire items cluster on the factors they are designed to measure. On that account, items with low factor loadings or cross-loadings were eliminated from the analysis. Another round of EFA was conducted on the remaining items to make sure that no items with low loadings or cross-loadings were left. It should be noted that a cross-loading item is one that loads at .32 or higher on two or more factors (Tabachnick & Fidell, 2001). To further test the model, the factors that emerged in EFA were subjected to confirmatory factor analysis (CFA), which was performed using AMOS 18 and based on the recommendations offered by Hu and Bentler (1999). Finally, to establish the level of internal consistency of the resulting TPACK survey, a reliability analysis using Cronbach’s alpha was conducted.
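The two item-screening rules described above (a primary loading greater than .40, and no loading of .32 or higher on two or more factors) can be sketched as a small Python function. This is an illustrative sketch only, not the procedure as run in SPSS; the function name `screen_items`, the order in which the rules are checked, and the loadings in the example are all hypothetical.

```python
def screen_items(pattern, min_loading=0.40, cross_loading=0.32):
    """Apply the two screening rules to a pattern matrix.

    pattern maps an item name to its list of loadings, one per factor.
    Returns (retained, dropped): retained maps item -> index of its factor,
    dropped maps item -> reason for removal.
    """
    retained, dropped = {}, {}
    for item, loadings in pattern.items():
        sizes = [abs(v) for v in loadings]
        salient = [v for v in sizes if v >= cross_loading]
        if max(sizes) <= min_loading:
            dropped[item] = "low loading"
        elif len(salient) >= 2:
            dropped[item] = "cross-loading"
        else:
            retained[item] = sizes.index(max(sizes))
    return retained, dropped

# Hypothetical loadings on three factors (not values from the study).
pattern = {
    "A1": [0.75, 0.10, 0.05],  # clean loading on the first factor
    "A2": [0.45, 0.38, 0.02],  # at or above .32 on two factors
    "B1": [0.12, 0.31, 0.20],  # never exceeds .40
}
retained, dropped = screen_items(pattern)
print(retained)
print(dropped)
```

After items are dropped, the factor analysis would be rerun on the retained items, since removing an item changes the loadings of those that remain.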

Results

According to Gorsuch (1983) the first step in conducting exploratory factor analysis is to examine the intercorrelation matrix to find out if it is factorable. Thus, the adequacy of the data (participants’ scores along TPACK subscales) for EFA was assessed through KMO and Bartlett’s test. Based on the results, the KMO measure of sampling adequacy was .87, which exceeds the recommended value of .6 (Kaiser, 1970, 1974). In addition, Bartlett’s test of sphericity reached statistical significance, which supports the factorability of the correlation matrix.

In the EFA phase of data analysis, rotation converged in ten iterations and yielded seven factors that together explained 67.57% of the cumulative variance of the data. The extracted factors accounted for 33.79%, 8.78%, 7.01%, 5.54%, 4.91%, 4.05%, and 3.46% of the variance of the responses, respectively. Of the seven factors produced, six corresponded to original TPACK constructs proposed by Mishra and Koehler (2006). Additionally, items measuring participants’ web content knowledge (WCK) loaded on the same factor. However, technological content knowledge (TCK) did not emerge as a distinct factor. Two of the TCK items merged with the TPACK factor, and the other one failed to load on any of the resulting factors. Six other items that belonged to other constructs were eliminated from the rest of the analysis because of low factor loadings. Hence, a 7-factor solution was accepted, along with 31 items that fell into the following categories: TK (5 items), PCK (2 items), PK (6 items), TPACK (6 items), TPK (4 items), CK (3 items), and WCK (5 items).

To investigate how well the resulting 7-factor model fitted the data, it was subjected to confirmatory factor analysis (CFA), which was performed using AMOS 18. AMOS yields various fit indices, but some measures are more commonly reported in studies involving the analysis of covariance structures. χ2 is probably the most common such index; it assesses the overall fit of the model and the discrepancy between the sample and the fitted covariance matrix (Kline, 2005). Since χ2 is greatly affected by sample size (Bentler & Bonett, 1980; Hooper, Coughlan, & Mullen, 2008), researchers usually report the values of absolute and incremental fit indices in addition to this statistic. Absolute fit indices determine to what extent an a priori model fits the sample data (McDonald & Ho, 2002). Such indices do not compare the obtained model with a baseline model; instead, they measure how well the model fits in comparison to no model at all (Jöreskog & Sörbom, 1993). Incremental fit indices, on the other hand, do not use the chi-square in its raw form but compare the chi-square value to that of a baseline model (Hu & Bentler, 1999).

The two absolute fit indices reported in this study are the Root Mean Square Error of Approximation (RMSEA) and the Standardized Root Mean Square Residual (SRMR). RMSEA is a parsimony-based index, which is sensitive to the number of parameter estimates in the model. SRMR, on the other hand, shows the difference between the residuals of the sample covariance matrix and the hypothesized model. Values between .08 and .10 for these two indices show poor model fit, and values below .08 indicate good model fit (Hooper et al., 2008). These absolute fit indices were accompanied by two incremental indices, namely the Comparative Fit Index (CFI) and the Tucker–Lewis Index (TLI), which compare the fit of a target model against an independent or null model. A cut-off value greater than .95 for these two indices is indicative of good model fit (Hu & Bentler, 1999).
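The cut-off values just described can be expressed as a simple check. The Python sketch below encodes only the thresholds stated in this section (below .08 for RMSEA and SRMR, above .95 for CFI and TLI); the function name `meets_cutoffs` and the input values are hypothetical and are not the statistics obtained in this study.

```python
def meets_cutoffs(rmsea, srmr, cfi, tli):
    """Report, index by index, whether the 'good fit' threshold is met.

    Thresholds follow the text: RMSEA and SRMR below .08,
    CFI and TLI above .95.
    """
    return {
        "RMSEA": rmsea < 0.08,
        "SRMR": srmr < 0.08,
        "CFI": cfi > 0.95,
        "TLI": tli > 0.95,
    }

# Hypothetical fit statistics for illustration only.
print(meets_cutoffs(rmsea=0.05, srmr=0.06, cfi=0.96, tli=0.94))
```

Reporting each index separately, rather than a single pass or fail verdict, makes it visible when the absolute and incremental indices disagree.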

The absolute fit indices calculated for the model are as follows: χ2 = 946.027 (df = 585, p < .001), χ2/df = 1.61, SRMR = .072, and RMSEA = .041. In addition, the incremental goodness-of-fit indices were calculated as follows: comparative fit index (CFI) = .072 and TLI = .926. All these statistics indicate that the 7-factor solution represents a good fit between the model and the data. Table 2 presents the factor loadings of the items in the validated questionnaire. Having established the validity of the instrument, we calculated its reliability following the method suggested by De Vaus (2002). According to Pallant (2010) and Stangor (2006), reliability values above .70 suggest very good internal consistency among the items within a questionnaire. Therefore, the TPACK subscales in the survey instrument we developed had an acceptable level of reliability (see Table 3). The items incorporated in the final survey instrument can be seen in Appendix I.

Table 2. Pattern Matrix of the TPACK Questionnaire (N = 206).

| Items | I (TPACK) | II (PK) | III (WCK) | IV (TPK) | V (TK) | VI (CK) | VII (PCK) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| TPCK2 | .75 | | | | | | |
| TPCK4 | .70 | | | | | | |
| TPCK3 | .70 | | | | | | |
| TPCK1 | .68 | | | | | | |
| TPCK5 | .67 | | | | | | |
| TPCK6 | .60 | | | | | | |
| PK4 | | .74 | | | | | |
| PK5 | | .73 | | | | | |
| PK6 | | .73 | | | | | |
| PK3 | | .71 | | | | | |
| PK2 | | .65 | | | | | |
| PK1 | | .58 | | | | | |
| WCK2 | | | .83 | | | | |
| WCK4 | | | .79 | | | | |
| WCK5 | | | .77 | | | | |
| WCK3 | | | .70 | | | | |
| WCK1 | | | .63 | | | | |
| TPK3 | | | | .74 | | | |
| TPK4 | | | | .64 | | | |
| TPK2 | | | | .63 | | | |
| TPK1 | | | | .54 | | | |
| TK4 | | | | | .46 | | |
| TK5 | | | | | .41 | | |
| TK3 | | | | | .78 | | |
| TK2 | | | | | .77 | | |
| TK1 | | | | | .74 | | |
| CK1 | | | | | | .83 | |
| CK2 | | | | | | .81 | |
| CK3 | | | | | | .75 | |
| PCK2 | | | | | | | .93 |
| PCK1 | | | | | | | .92 |

Table 3. Descriptive Statistics and Reliability Coefficients for Each Subscale (N = 206).

| Subscale | Number of items | Mean | SD | alpha |
| --- | --- | --- | --- | --- |
| TPACK | 6 | 5.53 | .96 | .86 |
| PK | 6 | 5.83 | .72 | .84 |
| TPK | 4 | 5.55 | .93 | .88 |
| TK | 5 | 5.31 | .92 | .75 |
| CK | 3 | 6.19 | .68 | .83 |
| PCK | 2 | 5.56 | 1.20 | .86 |
| WCK | 5 | 5.40 | .62 | .91 |
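For readers who wish to see how an alpha coefficient of the kind reported in Table 3 is obtained, the following minimal Python sketch implements the standard Cronbach's alpha formula, alpha = k/(k − 1) × (1 − Σ item variances / variance of total scores). The function name `cronbach_alpha` and the response matrix are hypothetical; the study itself computed reliability in SPSS.

```python
from statistics import variance

def cronbach_alpha(rows):
    """Compute Cronbach's alpha for one subscale.

    rows holds one list of item scores per respondent, with the same
    items in the same order; at least two items are required.
    """
    k = len(rows[0])
    # Sample variance of each item across respondents.
    item_vars = [variance(col) for col in zip(*rows)]
    # Sample variance of each respondent's total score.
    total_var = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Hypothetical answers of five teachers to a three-item subscale.
responses = [
    [5, 6, 5],
    [6, 7, 6],
    [4, 4, 5],
    [7, 7, 6],
    [5, 5, 4],
]
print(round(cronbach_alpha(responses), 2))
```

Alpha approaches 1 when respondents who score high on one item also score high on the others, which is why it is read as an index of internal consistency.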

Discussion

The primary purpose of the current study was to develop and validate a survey instrument for exploring Iranian EFL instructors’ TPACK perceptions. Results of the study revealed that a 31-item seven-factor instrument yielded an acceptable reproduction of the TPACK framework, and as a consequence it was a valid and reliable tool for measuring participants’ perceived level of TPACK knowledge. Responding teachers were able to distinguish six of the seven TPACK constructs, but they did not perceive TCK as an independent factor. The model obtained in this study produced more satisfactory results than those reported in Archambault and Crippen (2009), Chai et al. (2011), and Koh et al. (2010). While the results of our study showed that participants could differentiate between CK, PK, TK, PCK, TPK, and TPACK, some previous studies identified only four factors or even fewer. Archambault and Crippen (2009) found high correlations between TCK, TPK, and TPACK, and thus questioned the heuristic value of the model and its ability to predict outcomes or reveal new knowledge. Likewise, Koh et al. (2010) found that Singaporean teachers distinguished just two TPACK constructs (TK and CK) and did not perceive the other TPACK constructs to be distinct domains.

Based on our findings, participants distinguished between overlapping constructs such as PCK, TPK, and TPACK mainly because we adapted items from a questionnaire that was based on Jonassen, Howland, Marra, and Crismond’s (2008) model of meaningful learning with technology. This framework places emphasis on the use of active and constructive learning to solve authentic problems in group settings, and hence the survey instruments grounded in it reflected the ways in which technology-enhanced language teaching is implemented in Iranian EFL settings. A number of previous studies (e.g., Aziznezhad & Hashemi, 2011; Ebrahimi et al., 2016; Golshan & Tafazoli, 2014) point out that in Iran technology-assisted instruction is delivered through media such as Multimedia Messaging Service (MMS), podcasts, video games, and online discussion forums, all of which require EFL teachers to adopt constructivist pedagogical practices. Thus, this finding implies that when developing a TPACK-based instrument to survey teachers’ technology integration knowledge, researchers should craft or adapt items that are consistent with the specific instructional practices that responding teachers typically employ in their classes.

In addition to adopting items from a survey tool with constructivist-oriented assumptions about learning, we excluded items that were neutral with respect to subject matter and replaced them with new items that more accurately represented the nature of EFL/ESL courses. This procedure was another factor that may have contributed to the successful identification of six of the seven TPACK constructs by the Iranian EFL teachers. In recent years some concepts and practices have gained prominence in the ELT community in Iran, and EFL instructors attempt to comply with distinctive features of current practice in foreign language instruction, such as the communicative approach to teaching, improving learners’ autonomy, and encouraging inquiry-based learning in classes. As Borg (2006) states, language teaching is not just about learning the content but also about developing communication-related skills in learners. Accordingly, incorporating EFL-specific concepts (task-based activities, language learning strategies, group activities) in the questionnaire items helped respondents to better discriminate between different TPACK categories. This finding supports the view that, since the distinctive feature of TPACK is that it takes into account the contextually bound nature of teaching and learning with technology, any instrument created to evaluate teachers’ TPACK perceptions must address the way in which technology interacts with specific subject matter and context-specific pedagogy.

Despite the promising results of this study regarding the identification of TPACK constructs, both TCK items loaded on the factor containing TPACK items, so TCK failed to emerge as an independent construct. Apart from this, a number of TPK and TPACK items either did not load on their associated factors or had low factor loadings. This problem has been reported in a number of previous studies as well. For example, Archambault and Crippen (2009) found that even expert teachers who were reviewing the items in a TPACK-based survey experienced confusion regarding whether some items belonged to the TCK, TPK, or TPACK domains. These findings provide empirical support for the claim that the boundaries between some TPACK constructs are still blurry; consequently, more precise definitions of the four overlapping categories should be presented and the boundaries between them further clarified. Having argued that the definitions given by Mishra and Koehler (2006) focus mostly on the center of the constructs, Cox and Graham (2009) conducted a conceptual analysis to create a précising definition for each TPACK category and to shed light on the characterization of borderline cases. Further research is needed to explore whether this new approach to defining TPACK constructs can better address the complexity involved in isolating overlapping constructs.

One form of computer-based instruction that has generated considerable potential for improving language teaching is web-based instruction (WBI). As Khan (2001) states, WBI provides learners with wide access to instructional resources that go beyond the facilities available in traditional classrooms and offers them opportunities for open, flexible, and distributed learning. One major flaw in previous TPACK instruments was the lack of a construct devoted to teaching with web technology. To fill this gap, we included in the questionnaire a number of items measuring participants’ know-how with regard to teaching with the web and the Internet. Our findings indicated that all such items loaded on a distinct factor, which was named Web Content Knowledge (WCK). The emergence of a novel construct such as WCK suggests that responding teachers were able to differentiate between two different types of technological knowledge (TK). As Koehler and Mishra (2008) argue, teachers’ ability to distinguish between different forms of technological knowledge necessitates crafting TK items that are in line with the specific pedagogical tasks that the intended audience of a research study carries out in class. For example, if a group of EFL teachers is required to prepare software for an online course, then TK items should address participants’ familiarity with web-based environments such as cognitive flexibility hypertexts (CFTs), user-generated metadata, and social bookmarking.

The present study has several limitations. First, despite our efforts, we could not gain the approval of the directors of local language institutes to administer a paper-based version of the TPACK survey instrument to EFL teachers who did not have access to technological devices. As a consequence, only teachers who received the questionnaire by electronic mail were able to respond to this self-assessment survey. Since this might have biased responses to the items related to the use of technology, we suggest that in future studies data collection be arranged so that the questionnaire is administered to EFL teachers both online and in person. Second, considering the large number of EFL teachers in Iran who work in public high schools and private language centers, our sample consisted of a rather limited number of teachers. As a result, we had to conduct both EFA and CFA on the same sample of participants. We recommend that in future studies the sample be split into two subsamples, so that the model generated from the first subsample can be validated using the second, independent subsample. Lastly, we propose that researchers in later studies craft TPACK items based on the definitions proposed by Cox and Graham (2009) or the new contextualized model introduced by Jang and Tsai (2013) to see whether they enable teachers to discriminate between all overlapping constructs.
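The split-sample recommendation can be illustrated with a minimal sketch. It is not part of the original analysis: the function name `split_respondents`, the numeric respondent IDs, and the seed are hypothetical, and only the sample size (N = 206) comes from the study. The sketch assigns respondents at random to two non-overlapping halves, one for EFA and one for CFA.

```python
import random


def split_respondents(respondent_ids, seed=0):
    """Randomly split respondents into two non-overlapping halves.

    The first half would be used for EFA (model generation) and the
    second for CFA (model validation). Hypothetical helper, not the
    procedure used in the study.
    """
    ids = list(respondent_ids)
    # A seeded generator keeps the split reproducible across runs.
    random.Random(seed).shuffle(ids)
    half = len(ids) // 2
    return ids[:half], ids[half:]


# Hypothetical respondent IDs 1..206, matching the study's sample size.
respondent_ids = range(1, 207)
efa_ids, cfa_ids = split_respondents(respondent_ids, seed=1)

print(len(efa_ids), len(cfa_ids))  # 103 103
```

With 206 respondents each half contains only 103 cases, which may be too few for a stable factor solution on 31 items. This is consistent with the point made above that a larger sample would be needed before such a split becomes practical.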

Conclusion and Implications

The present study contributes to extant research on teachers’ technology integration by creating a valid and reliable instrument that can be employed to explore the perceived knowledge level of EFL teachers with respect to the different domains of the TPACK framework. Our findings lend support to the idea that the key to designing acceptable TPACK-based surveys is the inclusion of subject-matter-specific items rather than general or broad ones. In our study, the subject-neutral items in previous questionnaires were excluded and replaced by items crafted from the perspective of EFL, with the consequence that responding teachers were able to distinguish between overlapping TPACK constructs. Moreover, to overcome a deficiency in previous TPACK studies, a number of items relevant to teaching with the web were added to the questionnaire. The results of the factor analyses indicated that, contrary to the assertion made by Archambault and Crippen (2009), there are at least six distinct domains in the TPACK framework, and hence it is a useful measure for describing teachers’ perceptions of their technology literacy. We recommend that the TPACK instrument developed in this study be further examined, revised, and employed in both EFL and other educational settings.

Additionally, TPACK can serve not only as a basis for constructing survey tools but also as a basis for designing ICT courses for language teachers. To this end, policymakers responsible for teacher education and professional development courses can use TPACK-based programs to reexamine the extant curricula developed to enhance Iranian teachers’ ICT literacy. The instructional process known as the PT3 project, carried out by Pierson (2001), demonstrates how a pedagogically robust program can strengthen university faculty’s TPACK knowledge base. Likewise, Koh and Divaharan (2011) successfully implemented an instructional plan that enhanced teachers’ acceptance of technology proficiency by engaging them in design projects. We believe that introducing similar TPACK-based pedagogical interventions will positively influence the instructional practices of Iranian EFL instructors and that this, in turn, will improve the language learning outcomes of Iranian language learners.

About the Author

Mohammad Bagheri holds an MA degree in applied linguistics from the University of Tehran, Iran. His main research interests include second language acquisition, psycholinguistics, and quantitative research methods in education. ORCID ID: 0009-0008-6920-9925

References

Angeli, C., & Valanides, N. (2005). Preservice teachers as ICT designers: An instructional design model based on an expanded view of pedagogical content knowledge. Journal of Computer-Assisted Learning, 21(4), 292–302.

Angeli, C., & Valanides, N. (2009). Epistemological and methodological issues for the conceptualization, development, and assessment of ICT-TPCK: Advances in Technological Pedagogical Content Knowledge (TPCK). Computers & Education, 52(1), 154-168.

Archambault, L. M., & Barnett, J. H. (2010). Revisiting technological pedagogical content knowledge: exploring the TPACK framework. Computers & Education, 55(4), 1656–1662.

Archambault, L., & Crippen, K. (2009). Examining TPACK among K-12 online distance educators in the United States. Contemporary Issues in Technology and Teacher Education, 9(1), 71–88.

Aziznezhad, H., & Hashemi, M. (2011). Technology as a medium for applying constructivist teaching methods and inspiring kids. Procedia – Social and Behavioral Sciences, 28, 862-866.

Bentler, P. M., & Bonett, D. G. (1980). Significance tests and goodness of fit in the analysis of covariance structures. Psychological Bulletin, 88(3), 588–606.

Borg, S. (2006). Teacher cognition and language education: Research and practice. Continuum.

Chai, C. S., Koh, J. H. L., & Tsai, C. C. (2011). Exploring the factor structure of the constructs of technological, pedagogical, content knowledge (TPACK). Asia-Pacific Education Researcher, 20(3), 595–603.

Chapelle, C. A. (2008). Technology and second language acquisition. Annual Review of Applied Linguistics, 27, 98–114.

Cheung, C. K., & Slavin, R. E. (2012). How features of educational technology applications affect student reading outcomes: A meta-analysis. Educational Research Review, 7(3), 198-215.

Cochran, K. F., DeRuiter, J. A., & King, R. A. (1993). Pedagogical content knowing: An integrative model for teacher preparation. Journal of Teacher Education, 44(4), 263-272.

Costello, A. B., & Osborne, J. W. (2005). Exploratory Factor Analysis: Four recommendations for getting the most from your analysis. Practical Assessment, Research, and Evaluation, 10(7), 1-9.

Cox, S., & Graham, C. R. (2009). Diagramming TPACK in practice: Using an elaborated model of the TPACK framework to analyze and depict teacher knowledge. TechTrends, 53(5), 60–69.

Dashtestani, R. (2016). Moving bravely towards mobile learning: Iranian students’ use of mobile devices for learning English as a foreign language. Computer Assisted Language Learning, 29(4), 815-832.

De Vaus, D.A. (2002). Surveys in social research. Allen & Unwin.

Dillman, D. A. (2007). Mail and internet surveys: The tailored design method (2nd ed.). John Wiley & Sons Inc.

Doughty, C. J., & Long, M. H. (2003). Optimal psycholinguistic environments for distance foreign language learning. Language Learning & Technology, 7(3), 50–80.

Ebrahimi, A., Faghih, E., & Marandi, S. S. (2016). Factors affecting pre-service teachers’ participation in asynchronous discussion: The case of Iran. Australasian Journal of Educational Technology, 32(2), 115-129.

Fatemi Jahrommi, A., & Salimi, F. (2011). Exploring the human element of computer-assisted language learning: An Iranian context. Computer Assisted Language Learning, 26(2), 1-19.

Franklin, C. (2004). Teacher Preparation as a Critical Factor in Elementary Teachers: Use of Computers. In R. Ferdig, C. Crawford, R. Carlsen, N. Davis, J. Price, R. Weber & D. Willis (Eds.), Proceedings of SITE 2004–Society for Information Technology & Teacher Education International Conference (pp. 4994-4999). Association for the Advancement of Computing in Education (AACE).

Golshan, N., & Tafazoli, D. (2014). Technology-enhanced language learning tools in Iranian EFL context: Frequencies, attitudes and challenges. Procedia – Social and Behavioral Sciences, 136, 114-118.

Gorsuch, R. L. (1983). Factor analysis. Lawrence Erlbaum.

Grossman, P. L. (1990). The making of a teacher: Teacher knowledge and teacher education. Teachers College Press.

Gunter, G., & Baumbach, D. (2004). Curriculum integration. In A. Kovalchick & K. Dawson (Eds.), Education and technology: An encyclopedia. ABC-CLIO, Inc.

Harris, J.B. & Hofer, M.J. (2011). Technological pedagogical content knowledge (TPACK) in action: A descriptive study of secondary teachers’ curriculum-based, technology-related instructional planning. Journal of Research on Technology in Education, 43(3), 211-229.

Hedayati, H. F., & Marandi, S. S. (2014). Iranian EFL teachers’ perceptions of the difficulties of implementing CALL. ReCALL, 26(3), 298-314.

Hooper, D., Coughlan, J., & Mullen, M. R. (2008). Structural equation modeling: Guidelines for determining model fit. Electronic Journal of Business Research Methods, 6, 53–6.

Hu, L., & Bentler, P. M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling, 6, 1-55.

Irving, K. E. (2006). The impact of technology on the 21st century classroom. In J. Rhoton & P. Shane (Eds.), Teaching science in the 21st century (pp. 3-20). National Science Teachers Association Press.

Jang, S. J., & Tsai, M.F. (2013). Exploring the TPACK of Taiwanese secondary school science teachers using a new contextualized TPACK model. Australasian Journal of Educational Technology, 29(4), 566-58.

Jonassen, D., Howland, J., Marra, R., & Crismond, D. (2008). Meaningful learning with technology (3rd ed.). Pearson.

Jöreskog, K., & Sörbom, D. (1993). LISREL 8: User’s reference guide. Scientific Software International.

Kaiser, H. F. (1970). A second generation little jiffy. Psychometrika, 35(4), 401-415.

Kaiser, H. F. (1974). An index of factorial simplicity. Psychometrika, 39, 31–36.

Karimi, M. N. (2014). EFL students’ grammar achievement in a hypermedia context: Exploring the role of Internet-specific personal epistemology. System, 42(1), 1-11.

Khan, B. H. (2001). A framework for Web-based learning. In B. H. Khan (Ed.), Web-based training. Educational Technology Publications.

Kline, R. (2005). Principles and practice of structural equation modeling (2nd ed.). Guilford Press.

Koehler, M. J., & Mishra, P. (2005). What happens when teachers design educational technology? The development of technological pedagogical content knowledge. Journal of Educational Computing Research, 32(2), 131-152.

Koehler, M. J., & Mishra, P. (2008). Introducing TPCK. In AACTE Committee on Innovation and Technology (Ed.), The handbook of technological pedagogical content knowledge (TPCK) for educators (pp. 3–29). Lawrence Erlbaum Associates.

Koh, J.H.L. & Sing, C.C. (2011). Modeling pre-service teachers’ technological pedagogical content knowledge (TPACK) perceptions: The influence of demographic factors and TPACK constructs. In Proceedings of ASCILITE – Australian Society for Computers in Learning in Tertiary Education Annual Conference 2011 (pp. 735-746). Australasian Society for Computers in Learning in Tertiary Education.

Koh, J. H. L., Chai, C. S., & Tsai, C. C. (2010). Examining the technology pedagogical content knowledge of Singapore pre-service teachers with a large-scale survey. Journal of Computer Assisted Learning, 26(6), 563–573.

Koh, J. L., & Divaharan, S. (2011). Developing pre-service teachers’ technology integration expertise through the TPACK developing instructional model. Journal of Educational Computing Research, 44(1), 35-58.

Kramarski, B., & Michalsky, T. (2009). Three metacognitive approaches to training pre-service teachers in different learning phases of technological pedagogical content knowledge. Educational Research and Evaluation, 15(5), 465-485.

Laakkonen, I. (2011). Personal learning environments in higher education language courses: An informal and learner-centered approach. In S. Thouësny & L. Bradley (Eds.), Second language teaching and learning with technology: Views of emergent researchers (pp. 9-28). Research-publishing.net.

Lee, M. H., & Tsai, C. C. (2010). Exploring teachers’ perceived self-efficacy and technological pedagogical content knowledge with respect to educational use of the World Wide Web. Instructional Science, 38, 1–21.

Leinhardt, G., & Greeno, J. G. (1986). The cognitive skill of teaching. Journal of Educational Psychology, 78(2), 75-95.

Li, J., & Cumming, A. (2001). Word processing and ESL writing: A longitudinal case study. International Journal of English Studies, 1(2), 127-152.

Magnusson, S., Krajcik, J., & Borko, H. (1999). Nature, sources and development of pedagogical content knowledge for science teaching. In J. Gess-Newsome & N. G. Lederman (Eds.), Examining pedagogical content knowledge (pp. 95-132). Kluwer Academic Publisher.

McDonald, R. P., & Ho, M. H. R. (2002). Principles and practice in reporting structural equation analyses. Psychological Methods, 7(1), 64-82.

Meihami, H., Meihami, B., & Varmaghani, Z. (2013). The effect of Computer-assisted language learning on Iranian EFL students listening comprehension. International Letters of Social and Humanistic Sciences, 11, 57-65.

Mishra, P., & Koehler, M. J. (2006). Technological pedagogical content knowledge: A new framework for teacher knowledge. Teachers College Record, 108(6), 1017–1054.

Nami, F., Marandi, S., & Sotoudehnama, E. (2015). CALL teacher professional growth through lesson study practice: an investigation into EFL teachers’ perceptions. Computer Assisted Language Learning, 29(4), 658-682.

Pallant, J. (2010). SPSS survival manual. McGraw-Hill Education.

Pellettieri, J. (2000). Negotiation in cyberspace: The role of chatting in the development of grammatical competence. In M. Warschauer, & R. Kern, (Eds.), Network-based language teaching: Concepts and practice. Cambridge University Press.

Pierson, M. E. (2001). Technology integration practice as a function of pedagogical expertise. Journal of Research on Computing in Education, 33(4), 413–430.

Schmidt, D. A., Baran, E., Thompson, A. D., Mishra, P., Koehler, M. J., & Shin, T. S. (2009). Technological pedagogical content knowledge (TPACK): The development and validation of an assessment instrument for preservice teachers. Journal of Research on Technology in Education, 42(2), 123–149.

Shulman, L. S. (1986). Those who understand: Knowledge growth in teaching. Educational Researcher, 15(2), 4-14.

Stangor, C. (2006). Research methods for the behavioral sciences. Houghton Mifflin.

Stanley, G. (2013). Language Learning with Technology: Ideas for Integrating Technology in the Classroom. Cambridge University Press.

Tabachnick, B. G., & Fidell, L. S. (2001). Using multivariate statistics (4th ed.). Allyn and Bacon.

Taylor, R., & Gitsaki, C. (2004). Teaching WELL and loving IT. In S. Fotos & C. M. Browne (Eds.), New perspectives on CALL for the second/foreign language classroom (pp. 129–145). Lawrence Erlbaum Associates.

Thorndike, R. M. (2005). Measurement and evaluation in psychology and education. Pearson Prentice Hall.

Warschauer, M. (1996). Computer-assisted language learning: An introduction. In S. Fotos (Ed.), Multimedia language teaching (pp. 3-20). Logos International.

Westfall, P. H. (2014). Kurtosis as peakedness, 1905–2014. The American Statistician, 68(3), 191–195.

Wilson, S. M., Shulman, L. S., & Richert, A. E. (1987). ‘150 different ways’ of knowing: Representations of knowledge in teaching. In J. Calderhead (Ed.), Exploring teachers’ thinking (pp. 104-124). Cassell Educational.

Appendix. TPACK Survey Items.

| Constructs | Items |
| --- | --- |
| Technological Knowledge (TK) | (1) I have the technical skills to use computers effectively. |
| | (2) I am able to easily learn how to operate technological devices. |
| | (3) I am able to solve technical problems when I am using technological devices. |
| | (4) I keep myself updated about important new technologies. |
| | (5) I am able to create web pages. |
| Content Knowledge (CK) | (1) I have sufficient knowledge of/about English language grammar, vocabulary, and pronunciation. |
| | (2) I can speak and write in English fluently. |
| | (3) I am able to develop deeper understanding of/about English grammar, vocabulary, and pronunciation. |
| Pedagogical Knowledge (PK) | (1) I am able to stretch my students’ thinking by engaging them in challenging language learning tasks. |
| | (2) I am able to guide my students to adopt appropriate language learning strategies. |
| | (3) I am able to help my students to monitor the process of their own language learning. |
| | (4) I am able to help my students to reflect on their language learning strategies. |
| | (5) I am able to effectively implement task-based language teaching (TBLT) in my classes. |
| | (6) I am able to guide my students to discuss effectively during group work. |
| Pedagogical Content Knowledge (PCK) | (1) Without using technology, I am able to select effective teaching approaches that help my students to develop their language skills. |
| | (2) Without using technology, I am able to prepare curricular activities and lesson plans that will improve my students’ language skills. |
| Technological Pedagogical Knowledge (TPK) | (1) I am able to use technology to introduce my students to real world scenarios. |
| | (2) I am able to help my students to use technology to find more information about English language grammar, vocabulary, and pronunciation. |
| | (3) I am able to help my students to use technology to plan and monitor their own language learning. |
| | (4) I am able to encourage my students to use technology to construct different forms of knowledge representation. |
| Technological Pedagogical Content Knowledge (TPACK) | (1) I am able to appropriately combine my knowledge of EFL, technology and teaching methods to convey EFL-related content matter to my students. |
| | (2) I am able to select technologies to use in my classroom that enhance what I teach, how I teach and what students learn. |
| | (3) I am able to use teaching strategies that combine my knowledge of EFL, knowledge of technology and knowledge of teaching methods that I learned about in teacher education program. |
| | (4) I am able to provide leadership in helping my colleagues to coordinate the use of content, technologies and teaching methods at my school and/or district. |
| | (5) I know about the technologies that I have to use to obtain more knowledge about English grammar, vocabulary, and pronunciation. |
| | (6) I am able to use appropriate technologies (e.g., multimedia resources, simulation) to present EFL-related content matter to my students. |
| Web Content Knowledge (WCK) | (1) I am able to enrich the language courses I teach using the materials from the Web. |
| | (2) I am able to find appropriate online resources that can be used in my language classes. |
| | (3) I am able to select proper content from Web resources for English classes I teach. |
| | (4) I am able to search related online materials for course content. |
| | (5) I am able to search for various materials on the Web to be integrated into course content. |

Copyright rests with authors. Please cite TESL-EJ appropriately.

Editor’s Note: The HTML version contains no page numbers. Please use the PDF version of this article for citations.